Robots: How to Keep Them Out of Your Web Site

Spiders, crawlers, or robots explore the web, seeking out and recording all kinds of information. They generally begin with URLs submitted by users, links they find on web sites, sitemap files, or the top level of a site.

As you know, search engines were created to help people find information quickly on the Internet, and they gather much of their data through robots (also called spiders or crawlers) that look for web pages on their behalf.

Once the robot accesses the home page, it recursively accesses all pages linked from that page. A robot can also check out every page it can find on a particular server.
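The link-following behaviour described above can be sketched as a simple traversal. This is only an illustration: the `SITE_LINKS` map is a hypothetical in-memory stand-in for a real site, no network requests are made, and an explicit queue is used in place of recursion.

```python
from collections import deque

# Hypothetical site: each page maps to the pages it links to.
SITE_LINKS = {
    "/": ["/about.html", "/e-books/"],
    "/about.html": ["/"],
    "/e-books/": ["/e-books/guide.html"],
    "/e-books/guide.html": [],
    "/orphan.html": [],  # exists on the server but nothing links to it
}


def crawl(start, links):
    """Return the pages reachable from `start`, in the order first visited."""
    seen = {start}
    order = []
    queue = deque([start])
    while queue:
        page = queue.popleft()
        order.append(page)
        for target in links.get(page, []):
            if target not in seen:  # never visit the same page twice
                seen.add(target)
                queue.append(target)
    return order


visited = crawl("/", SITE_LINKS)
print(visited)
```

A robot that only follows links never reaches `/orphan.html` here; it would have to learn about that page some other way, such as a sitemap or a submitted URL.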

After the robot finds a web page, it sets to work indexing the title, the keywords, the text, and so on. But sometimes you might want to prevent search engines from indexing some of your web pages, such as news postings and specially marked pages (for example, affiliates’ pages). Keep in mind that whether individual robots comply with these conventions is purely voluntary.

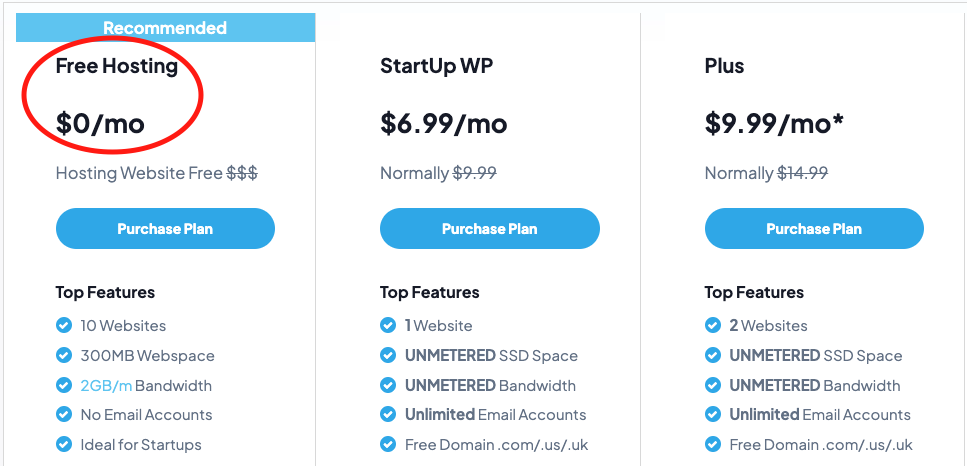


Robots Exclusion Protocol:

If you want robots to keep out of some of your web pages, you can ask them to ignore the pages you don’t want indexed. To do this, place a robots.txt file at the root of your web site.

For example, if you have a directory called e-books and you want to ask robots to keep out of it, your robots.txt file needs a User-agent: * line that addresses all robots, followed by a Disallow: /e-books/ line that excludes the directory.
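For the e-books case, a robots.txt file would typically contain the two lines held in `ROBOTS_TXT` below. The sketch feeds them to Python's standard `urllib.robotparser` to show how a well-behaved robot might interpret them; the robot name `ExampleBot` and the host `www.example.com` are placeholders, not anything from the original article.

```python
from urllib.robotparser import RobotFileParser

# Contents of a robots.txt file that asks every robot to stay out of /e-books/.
ROBOTS_TXT = """\
User-agent: *
Disallow: /e-books/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse the text directly, no download

# "ExampleBot" and www.example.com are hypothetical names for illustration.
ebook_ok = parser.can_fetch("ExampleBot", "http://www.example.com/e-books/guide.html")
home_ok = parser.can_fetch("ExampleBot", "http://www.example.com/index.html")
print(ebook_ok, home_ok)
```

The file itself does not block anything. It only states a request that compliant robots check before fetching a page.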

If you don’t have enough control over your server to set up a robots.txt file, you can try adding a META tag to the head section of any HTML document.

For example, a META tag named robots with the content value “noindex, nofollow” tells robots not to index a particular page and not to follow the links on it.
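Such a tag usually looks like the `<meta name="robots" ...>` line inside the sample page below. The rest of the sketch shows one way a robot might read it, using Python's standard `html.parser`; the page content and the `RobotsMetaParser` class are made up for illustration.

```python
from html.parser import HTMLParser

# Hypothetical page whose head section carries the robots META tag.
PAGE = """<html>
<head>
<title>Affiliate page</title>
<meta name="robots" content="noindex, nofollow">
</head>
<body><a href="/partners.html">Partners</a></body>
</html>"""


class RobotsMetaParser(HTMLParser):
    """Collects the directives found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            # Directives are comma-separated and case-insensitive.
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


meta = RobotsMetaParser()
meta.feed(PAGE)
may_index = "noindex" not in meta.directives
may_follow = "nofollow" not in meta.directives
print(may_index, may_follow)
```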

Support for the META tag among robots is not as widespread as support for the Robots Exclusion Protocol, but most of the major web indexes currently honor it.

News Postings:

If you want to keep search engines out of your news postings, you can add an X-No-Archive: Yes line to your postings’ headers.
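The header in question is conventionally written as `X-No-Archive: Yes`. As a rough sketch, the snippet below builds a posting with that header using Python's standard `email.message` module. The sender address, newsgroup, and subject are invented, and the code only constructs the message; it does not post it anywhere.

```python
from email.message import EmailMessage

# All header values except X-No-Archive are hypothetical examples.
post = EmailMessage()
post["From"] = "poster@example.com"
post["Newsgroups"] = "misc.test"
post["Subject"] = "Test posting"
post["X-No-Archive"] = "Yes"  # asks archiving services not to store this posting
post.set_content("This posting asks archives not to keep a copy.\n")

print(post.as_string())
```

Many news readers let you add custom headers in their settings, which has the same effect without any code.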

Be careful, though: while the robot and archive exclusion standards may help keep your material out of the major search engines, there are others that respect no such rules.

If you are seriously concerned about the privacy of your email and Usenet postings, you should use anonymous remailers and PGP. You can read about it here:

Is your web site constantly getting hit by automated web robots, causing denial of service and excess bandwidth consumption?

What are automated web robots?

Automated web robots are necessary and generally a “good thing” on the web. A web robot can be described as a program that automates a specific function on the web. For example, almost all search engines use robots to automate the process of grabbing a copy of your web pages and indexing them so that other Internet users can find your pages online.

Such a robot visits your pages from time to time to check whether they have been updated and re-indexes them if necessary. Another example of a robot is a program that follows the links on a web page, retrieves all related pages, images, and so on, and makes a copy of the web site on your computer that you can read off-line.

What’s the problem here?

So what’s the problem, you ask? Most web sites are designed to be read by real people, not robots. The main difference is that a person follows only the links that interest him or her and does so at a “human pace,” while a robot might be set up to download all linked pages and the objects on those pages at speeds limited only by the rate at which the web server and the Internet connection can serve your pages.

To add to the problem, some robots may also download multiple pages and objects at the same time.

So what? Someone liked my pages enough to read them off-line!

Isn’t it a sign that someone liked my web pages if they took the trouble to download them to view later? Perhaps, but whether you have lots of small pages, a smaller number of large pages, or just a single time-consuming script, a web robot could have troublesome side effects on your site, including:

Temporary denial of service caused by heavy bursts of page downloading. Other visitors to your web site may not be able to access your pages at the usual speed, or in extreme cases not at all, because a robot is busy downloading your pages in a heavy burst and consuming most of your web server resources and Internet connection bandwidth.

Excessive consumption of bandwidth. Because the content that robots download may or may not be useful to someone later, there is a greater chance that content is downloaded and then simply thrown away.

This can be a waste of your bandwidth. If you pay on a per-bandwidth basis, or if you have daily or monthly bandwidth limits, frequent visits from robots could consume a large part of this bandwidth.
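To get a feel for the scale, here is a back-of-the-envelope estimate. Every figure in it (500 pages, 200 KB per page, one full download a day, a 10 GB monthly limit) is an assumed value chosen for illustration, not a measurement from any real site.

```python
def monthly_robot_bandwidth_mb(pages, avg_page_kb, visits_per_month):
    """Bandwidth used in MB if a robot downloads every page on each visit."""
    return pages * avg_page_kb * visits_per_month / 1024


# Assumed figures: 500 pages of 200 KB each, fully downloaded once a day.
usage_mb = monthly_robot_bandwidth_mb(pages=500, avg_page_kb=200, visits_per_month=30)

# Assumed hosting plan with a 10 GB monthly bandwidth limit.
quota_mb = 10 * 1024
share = usage_mb / quota_mb

print(f"{usage_mb:.0f} MB per month, {share:.0%} of the quota")
```

Under these assumptions a single robot would use more than a quarter of the monthly allowance before any human visitor arrives.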